The front-end intelligence layer your OTel stack is missing

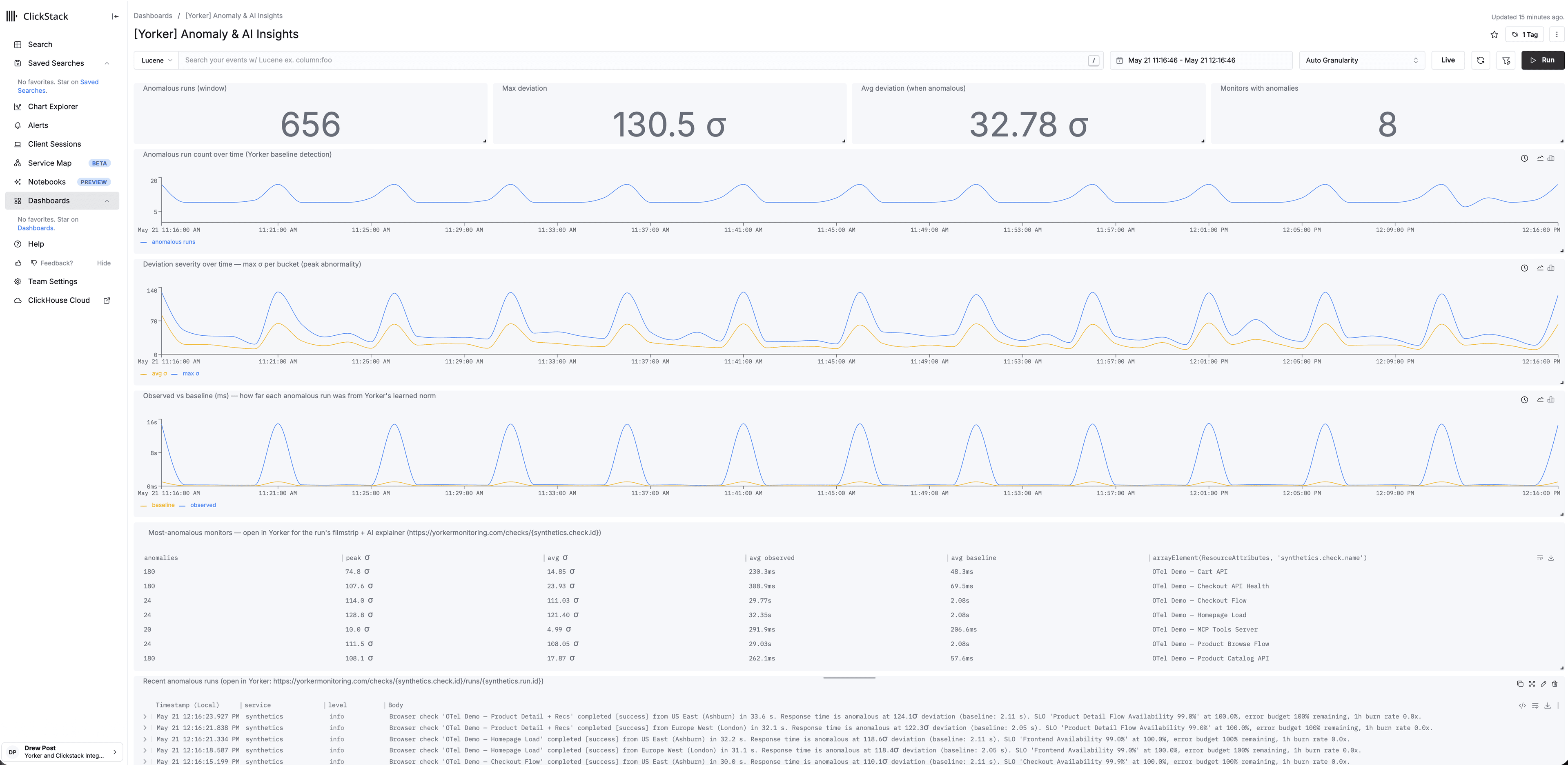

And the fuel that makes your AI ops tools actually work. Structured synthetic intelligence (pre-correlated, anomaly-scored, OTel-native) flowing directly into your existing stack.

Not metrics. Intelligence.

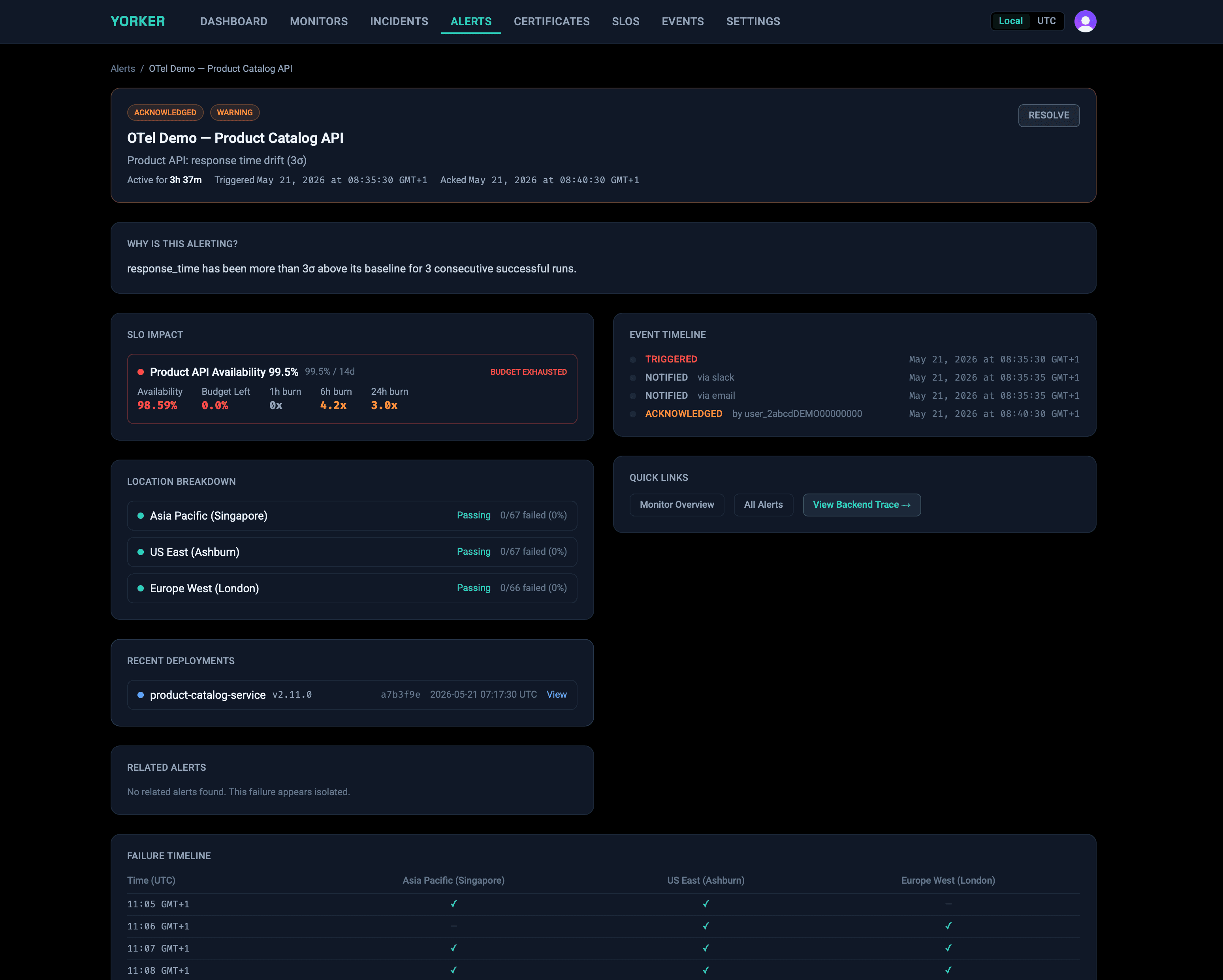

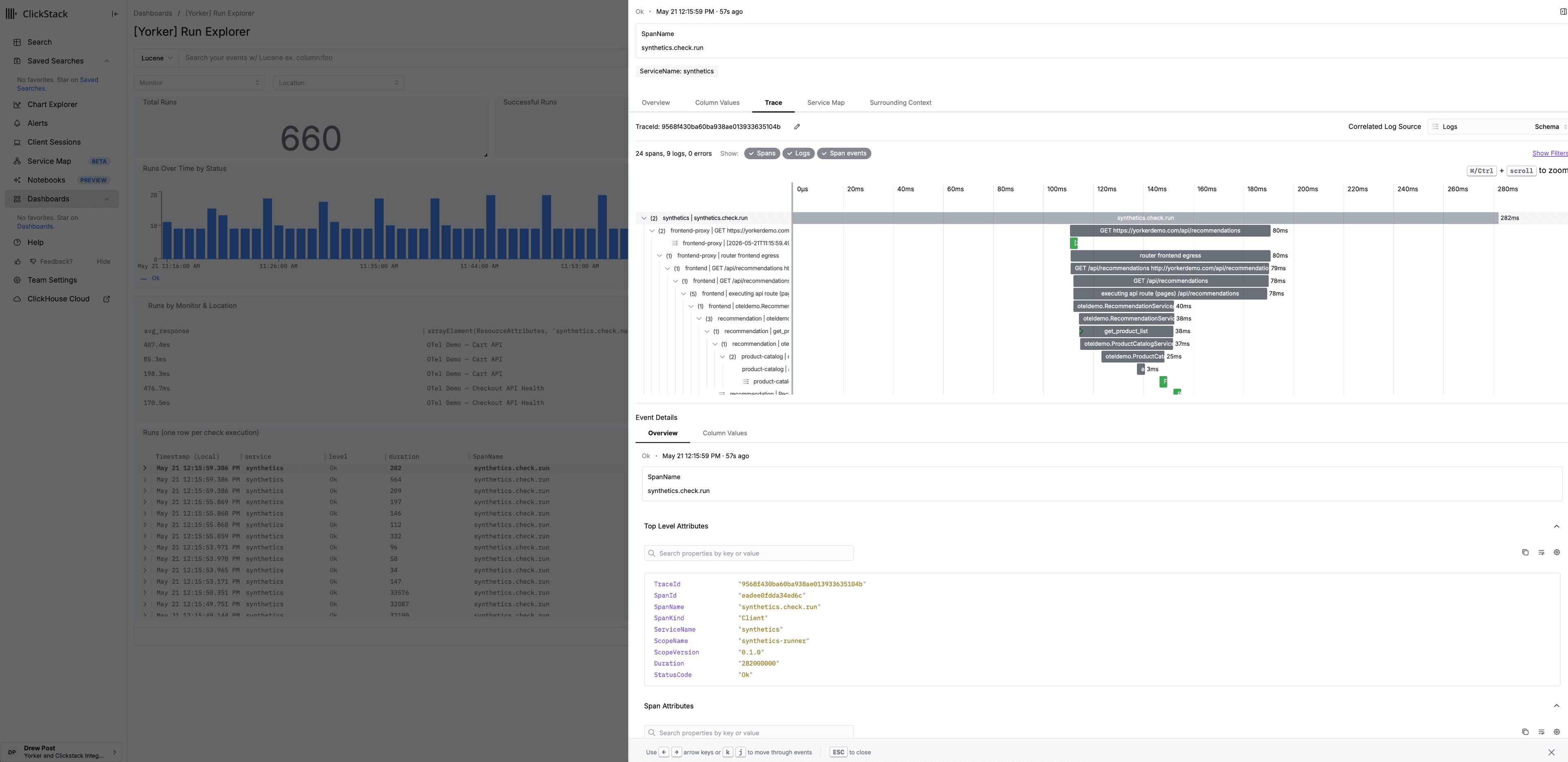

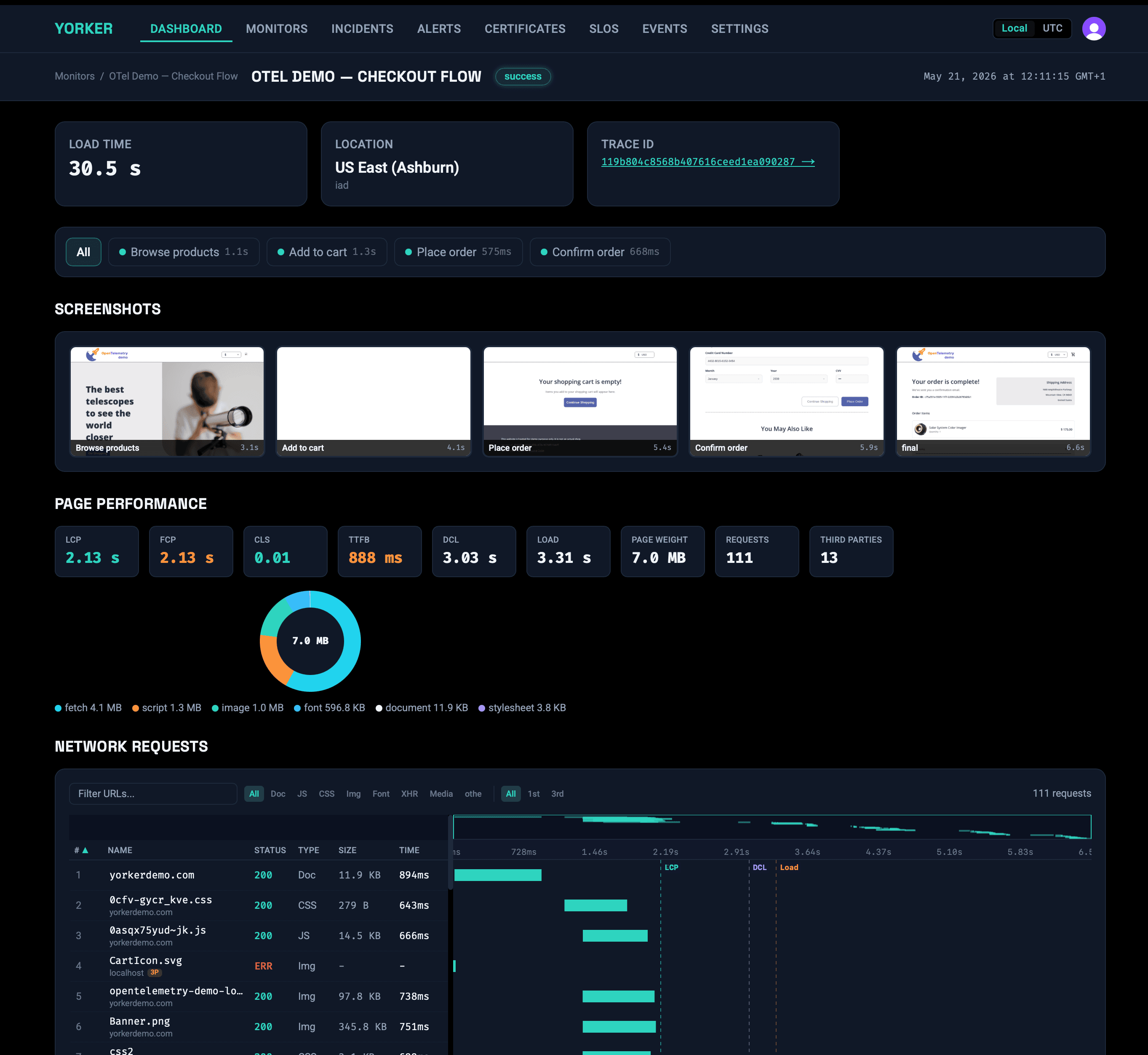

Basic synthetic monitoring emits a response time and a pass/fail. Yorker emits a rich OTLP insight pack on every check, as standard OTel traces, metrics, and logs: dependency attribution and screenshot URLs on the check.run span, full timing breakdowns as metrics, and structured check.completed log events that carry anomaly deviation when behavior drifts from per-metric, per-location baselines. It also injects W3C traceparent headers into HTTP requests for backend trace correlation.

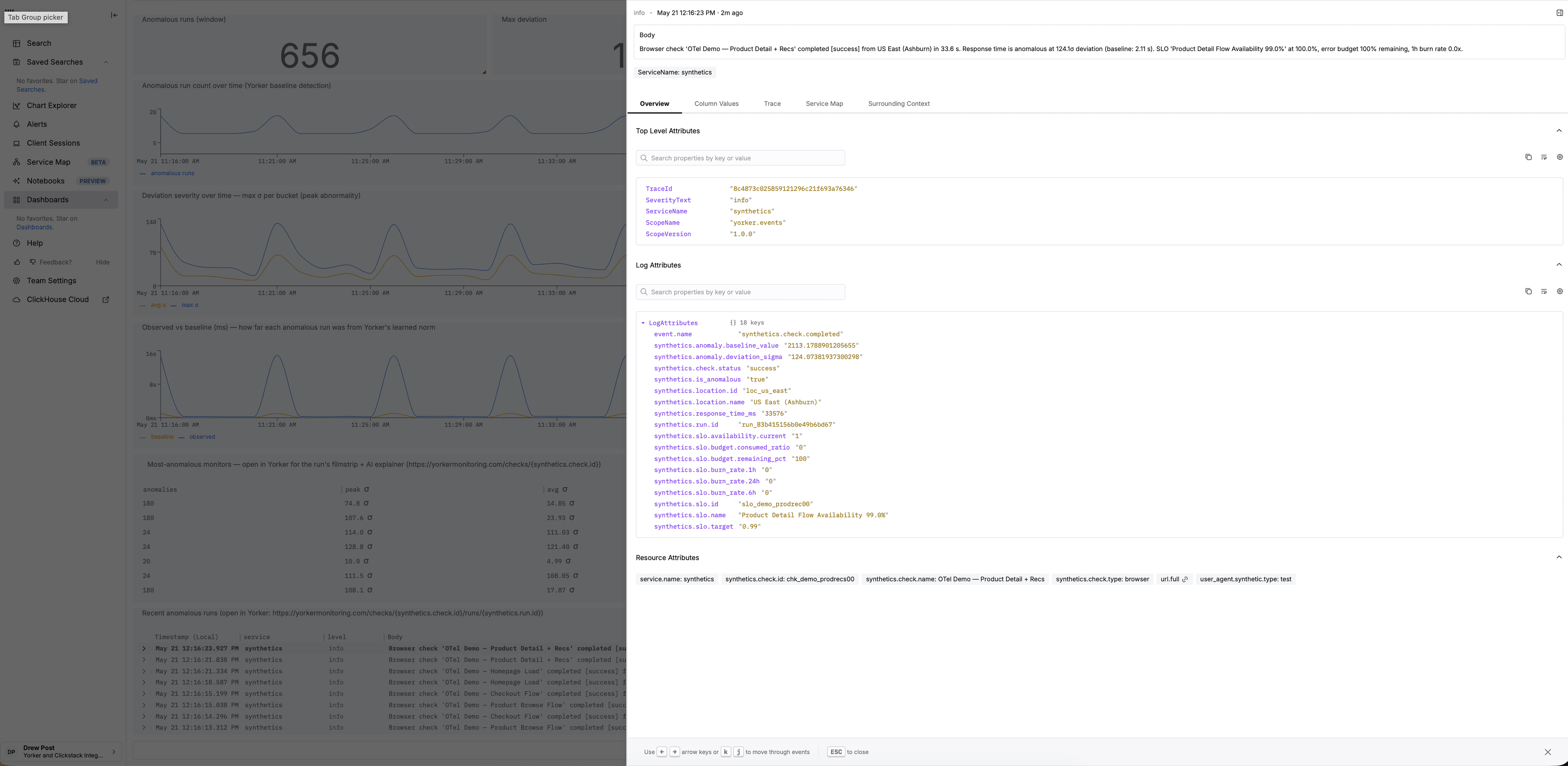

Anomaly attributes on every check.completed event

Every metric is scored against a 14-day rolling baseline, per location, per hour-of-day. The synthetics.check.completed log event fires on every run; when behavior deviates from the baseline, the deviation, measured in standard deviations (σ), is attached as a log attribute.
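A minimal TypeScript sketch of how such a baseline could yield a deviation in σ. It assumes UTC hour-of-day buckets, a population standard deviation over the previous 14 days, a fixed 3σ reporting threshold, and a hypothetical attribute name (anomaly.deviation_sigma); none of these are documented Yorker internals.

```typescript
// Sketch: score a check metric against a 14-day baseline bucketed by
// location and UTC hour-of-day. Threshold and attribute name are assumptions.

interface Sample {
  location: string; // e.g. "loc_eu_west"
  timestamp: Date;
  value: number; // e.g. total response time in ms
}

const BASELINE_WINDOW_MS = 14 * 24 * 60 * 60 * 1000;

// Baseline bucket: same location, same UTC hour of day.
function bucketKey(location: string, ts: Date): string {
  return `${location}|${ts.getUTCHours()}`;
}

// Returns (value - mean) / stddev for the current sample, or null when the
// bucket has too little history or zero variance to score against.
function deviationSigma(history: Sample[], current: Sample): number | null {
  const key = bucketKey(current.location, current.timestamp);
  const end = current.timestamp.getTime();
  const start = end - BASELINE_WINDOW_MS;
  const values = history
    .filter((s) => {
      const t = s.timestamp.getTime();
      return t >= start && t < end && bucketKey(s.location, s.timestamp) === key;
    })
    .map((s) => s.value);
  if (values.length < 2) return null;
  const mean = values.reduce((sum, v) => sum + v, 0) / values.length;
  const variance = values.reduce((sum, v) => sum + (v - mean) ** 2, 0) / values.length;
  const stddev = Math.sqrt(variance);
  return stddev === 0 ? null : (current.value - mean) / stddev;
}

// Log attributes for the check.completed event: empty unless the
// deviation crosses the (assumed) threshold.
function anomalyAttributes(
  history: Sample[],
  current: Sample,
  thresholdSigma = 3,
): Record<string, number> {
  const z = deviationSigma(history, current);
  return z !== null && Math.abs(z) >= thresholdSigma
    ? { "anomaly.deviation_sigma": z } // hypothetical attribute name
    : {};
}
```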

Dependency attribution

Third-party count, total bytes, and domain list emitted as OTel span attributes on every browser check. See cdn.tagmanager.net in your ClickHouse table, not just a number.
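One way to picture the reduction from a browser check's network log to span attributes, as a rough sketch. It assumes that first-party means the site's own domain or any subdomain of it, reads the count as third-party requests, and uses hypothetical thirdparty.* attribute keys that may not match what Yorker emits.

```typescript
// Sketch: reduce a browser check's network log to third-party span attributes.
// First-party rule, count semantics, and attribute keys are assumptions.

interface NetworkEntry {
  url: string;
  transferBytes: number;
}

type AttributeValue = string | number | string[];

function isFirstParty(hostname: string, firstPartyDomain: string): boolean {
  return hostname === firstPartyDomain || hostname.endsWith(`.${firstPartyDomain}`);
}

function thirdPartyAttributes(
  entries: NetworkEntry[],
  firstPartyDomain: string,
): Record<string, AttributeValue> {
  const thirdParty = entries
    .map((e) => ({ host: new URL(e.url).hostname, bytes: e.transferBytes }))
    .filter((e) => !isFirstParty(e.host, firstPartyDomain));
  return {
    "thirdparty.request_count": thirdParty.length,
    "thirdparty.total_bytes": thirdParty.reduce((sum, e) => sum + e.bytes, 0),
    // OTel attributes may hold homogeneous arrays, so the domain list fits as-is.
    "thirdparty.domains": Array.from(new Set(thirdParty.map((e) => e.host))).sort(),
  };
}
```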

Cross-monitor correlation

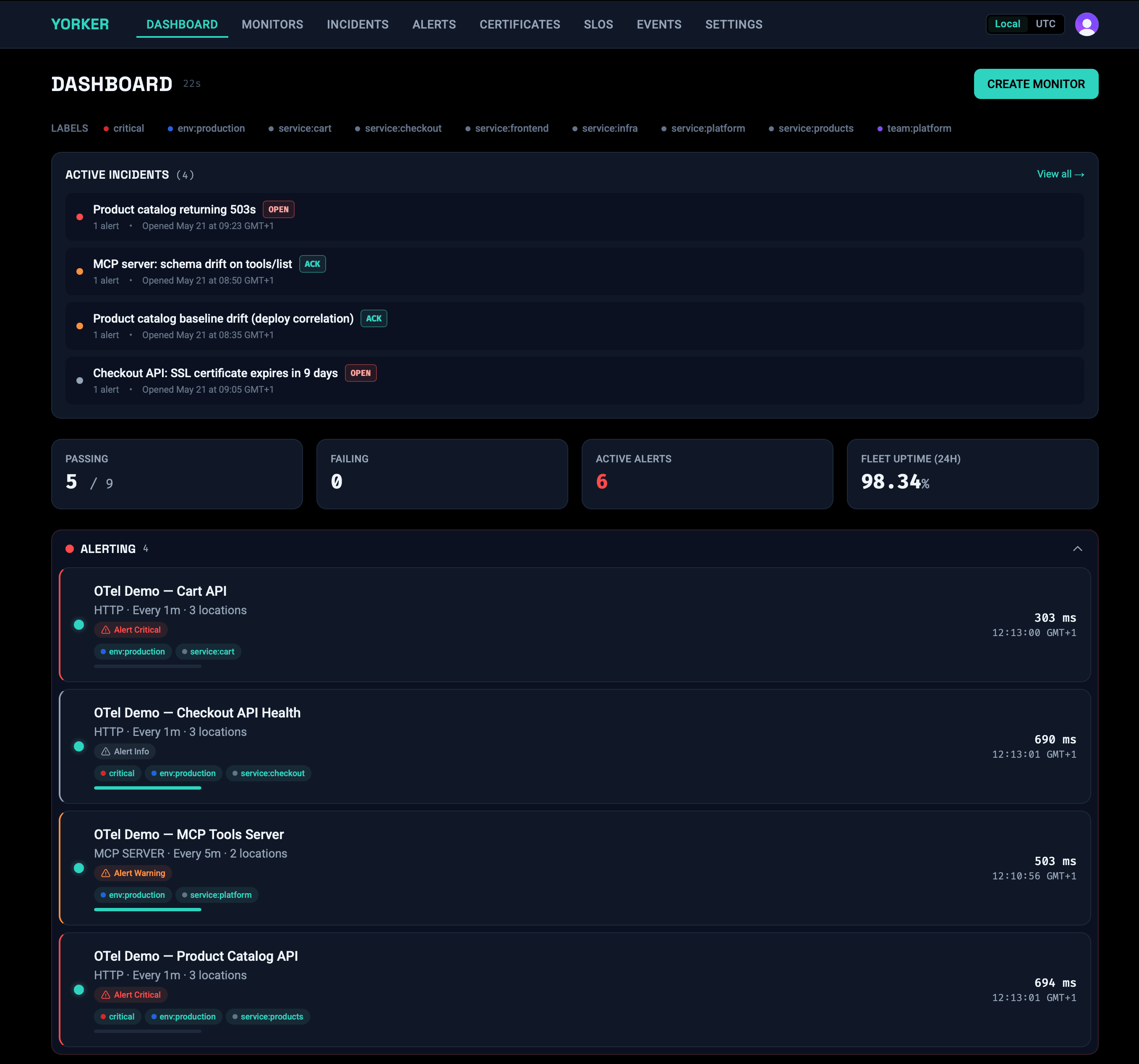

When two or more browser monitors fail within a five-minute window and observe the same third-party dependency, Yorker emits a synthetics.correlation.detected log event with the affected check IDs. Co-occurrence signal across your monitor portfolio, structured for notebook queries. Browser checks only: the signal is derived from network-summary data the browser executor captures.
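The co-occurrence rule above can be modeled as a grouping pass over recent browser-check failures. This is a simplified sketch, not Yorker's implementation: failures are grouped per third-party domain, each cluster spans five minutes from its earliest failure, at least two distinct monitors are required, and the payload fields besides the event name are hypothetical.

```typescript
// Simplified model of cross-monitor correlation for browser-check failures.
// Grouping rules and payload fields are assumptions, not Yorker internals.

interface BrowserFailure {
  checkId: string;
  monitorId: string;
  failedAt: number; // epoch milliseconds
  thirdPartyDomains: string[]; // from the check's network summary
}

interface CorrelationEvent {
  eventName: "synthetics.correlation.detected";
  domain: string;
  checkIds: string[];
}

const CORRELATION_WINDOW_MS = 5 * 60 * 1000;

function detectCorrelations(failures: BrowserFailure[]): CorrelationEvent[] {
  // Index failures by every third-party domain they observed.
  const byDomain = new Map<string, BrowserFailure[]>();
  for (const f of failures) {
    for (const domain of f.thirdPartyDomains) {
      const list = byDomain.get(domain) ?? [];
      list.push(f);
      byDomain.set(domain, list);
    }
  }

  const events: CorrelationEvent[] = [];
  byDomain.forEach((list, domain) => {
    const sorted = list.slice().sort((a, b) => a.failedAt - b.failedAt);
    let start = 0;
    while (start < sorted.length) {
      // Cluster = all failures within five minutes of the earliest unclaimed one.
      let end = start;
      while (end < sorted.length && sorted[end].failedAt - sorted[start].failedAt <= CORRELATION_WINDOW_MS) {
        end++;
      }
      const cluster = sorted.slice(start, end);
      // Require two or more distinct monitors, not repeats of a single monitor.
      if (new Set(cluster.map((f) => f.monitorId)).size >= 2) {
        events.push({
          eventName: "synthetics.correlation.detected",
          domain,
          checkIds: cluster.map((f) => f.checkId),
        });
      }
      start = end;
    }
  });
  return events;
}
```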

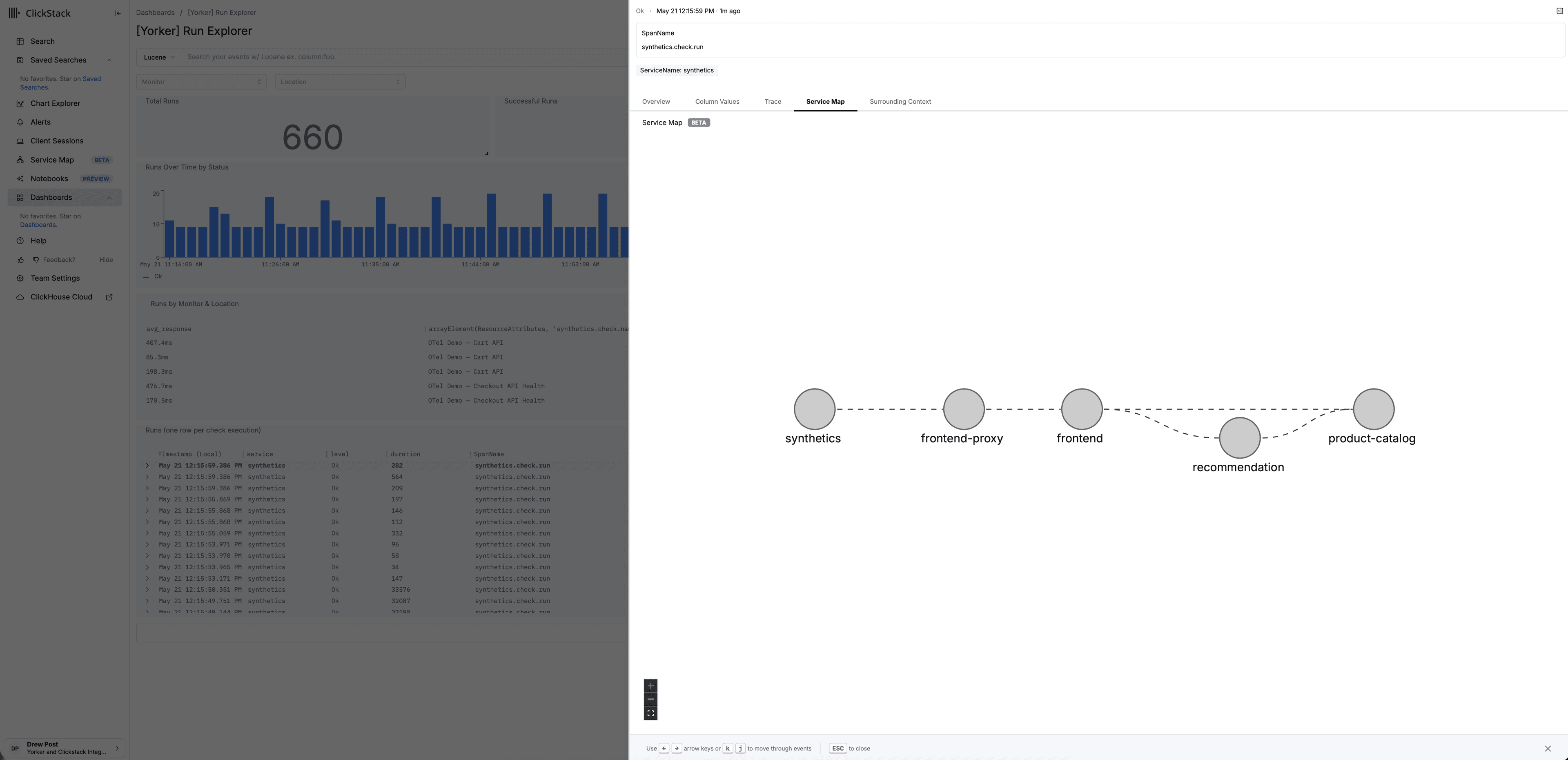

Screenshot URLs in traces

Browser check screenshots are stored and linked directly in the OTel span. Pull up the filmstrip from inside Grafana, a ClickStack incident view, or your runbook.

W3C trace propagation

Synthetic browser checks inject traceparent headers into every request. Your backend picks them up. The synthetic check and the backend request share a single distributed trace.
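For reference, a W3C traceparent header has four dash-separated fields: a version (00), a 32-hex-digit trace ID, a 16-hex-digit parent span ID, and two hex digits of trace flags, where the low bit marks the trace as sampled. The sketch below builds and parses that format. It only accepts version 00, and Math.random stands in for a proper random source, so treat it as illustrative rather than production code.

```typescript
// Sketch of the W3C Trace Context traceparent header (version 00 only).
// Math.random is not cryptographically strong; use a secure RNG in practice.

const TRACEPARENT_RE = /^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

function randomHex(byteCount: number): string {
  let out = "";
  for (let i = 0; i < byteCount; i++) {
    const byte = Math.floor(Math.random() * 256);
    out += ("0" + byte.toString(16)).slice(-2); // zero-pad to two hex digits
  }
  return out;
}

// traceId: 16 bytes (32 hex chars); parentId: 8 bytes (16 hex chars).
// A production generator would also regenerate the (vanishingly rare) all-zero IDs.
function makeTraceparent(
  traceId: string = randomHex(16),
  parentId: string = randomHex(8),
  sampled = true,
): string {
  return `00-${traceId}-${parentId}-${sampled ? "01" : "00"}`;
}

interface TraceContext {
  traceId: string;
  parentId: string;
  sampled: boolean;
}

function parseTraceparent(header: string): TraceContext | null {
  const m = TRACEPARENT_RE.exec(header.trim());
  if (m === null) return null;
  const [, traceId, parentId, flags] = m;
  // All-zero trace or parent IDs are invalid per the spec.
  if (/^0+$/.test(traceId) || /^0+$/.test(parentId)) return null;
  return { traceId, parentId, sampled: (parseInt(flags, 16) & 0x01) === 1 };
}
```

A check runner could attach makeTraceparent() as the traceparent request header; a backend instrumented with an OpenTelemetry W3C Trace Context propagator would then continue the same trace ID in its own spans.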

TLS context in every check

Certificate expiry, issuer chain, and fingerprint are emitted as span attributes. Your on-call dashboard knows the cert expires in 4 days, before a user ever sees a browser warning.
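A minimal sketch of turning certificate data into span attributes and an expiry warning. In Node.js the raw values could come from tls.TLSSocket's getPeerCertificate() (its valid_to, issuer, and fingerprint256 fields); here they are passed in directly to keep the logic self-contained. The attribute names and the 7-day threshold are assumptions for illustration.

```typescript
// Sketch: derive TLS span attributes and an expiry warning from certificate data.
// Attribute names and the default threshold are illustrative assumptions.

interface CertInfo {
  validTo: Date; // certificate notAfter
  issuer: string; // issuer DN flattened to a string, e.g. "CN=Example CA"
  fingerprint256: string; // SHA-256 fingerprint, colon-separated hex
}

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Whole days until expiry; negative once the certificate has expired.
function daysUntilExpiry(cert: CertInfo, now: Date): number {
  return Math.floor((cert.validTo.getTime() - now.getTime()) / MS_PER_DAY);
}

function tlsSpanAttributes(cert: CertInfo, now: Date): Record<string, string | number> {
  return {
    "tls.cert.days_remaining": daysUntilExpiry(cert, now),
    "tls.cert.not_after": cert.validTo.toISOString(),
    "tls.cert.issuer": cert.issuer,
    "tls.cert.fingerprint_sha256": cert.fingerprint256,
  };
}

// True when the certificate is inside the warning window (or already expired).
function shouldWarn(cert: CertInfo, now: Date, thresholdDays = 7): boolean {
  return daysUntilExpiry(cert, now) <= thresholdDays;
}
```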

Yorker is the synthetic signal ClickStack and HyperDX were waiting for.

ClickHouse's observability stack gives you fast, open SQL over your telemetry. Yorker fills the synthetic-monitoring gap with spans, metrics and log events that land natively in ClickStack/HyperDX, complete with W3C traceparent propagation so a failing checkout links straight to the backend trace that explains it.

Point Yorker at your HyperDX OTLP endpoint, then provision the 8 pre-built dashboards in one click into self-hosted HyperDX or ClickStack Cloud.

Why a synthetic monitoring tool built OTLP-first, for the ClickHouse/ClickStack era, looks different from the platform-era incumbents.

Same OTLP signal also flows into

Monitors aren't silos. Shared failures are shared signals.

That ad network script loaded via tag manager, the one that never goes through your release process, just added 600ms to six different user journeys simultaneously. Yorker sees the pattern across monitors, not per-monitor in isolation.

- Request-level attribution, across monitors

Payload weight and latency tracked per third-party domain across every browser check. When cdn.adnetwork.com degrades, all six affected monitors surface it together.

- Baseline anomaly alerts on dependencies you don't deploy

Anomaly detection operates at the dependency level, not just the check level. Get alerted when a third-party script changes behavior, before your users feel it.

- Pattern detection across your monitor portfolio

When multiple browser monitors fail within a five-minute window and share a third-party dependency, Yorker surfaces the co-occurrence automatically as one log event per distinct correlation slice. Structured signal to investigate, not six separate alerts to correlate manually.
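To make request-level attribution across monitors concrete, this sketch rolls up third-party request timings from several browser checks by domain: which monitors loaded it, total bytes, and median latency. The input shape and output fields are hypothetical; a real pipeline would also score these figures against dependency-level baselines.

```typescript
// Sketch: roll up third-party request timings across monitors by domain.
// Input/output shapes are hypothetical, for illustration only.

interface DomainRequest {
  monitorId: string;
  domain: string;
  bytes: number;
  durationMs: number;
}

interface DomainSummary {
  domain: string;
  monitors: string[]; // distinct monitors that loaded this domain
  totalBytes: number;
  medianMs: number;
}

function median(values: number[]): number {
  const sorted = values.slice().sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

function summarizeByDomain(requests: DomainRequest[]): DomainSummary[] {
  const groups = new Map<string, DomainRequest[]>();
  for (const r of requests) {
    const list = groups.get(r.domain) ?? [];
    list.push(r);
    groups.set(r.domain, list);
  }
  const summaries: DomainSummary[] = [];
  groups.forEach((list, domain) => {
    summaries.push({
      domain,
      monitors: Array.from(new Set(list.map((r) => r.monitorId))).sort(),
      totalBytes: list.reduce((sum, r) => sum + r.bytes, 0),
      medianMs: median(list.map((r) => r.durationMs)),
    });
  });
  return summaries.sort((a, b) => a.domain.localeCompare(b.domain));
}
```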

Your ops tools are only as good as what you feed them.

Automated on-call tools, causal analysis platforms, ClickStack AI Notebooks, PagerDuty runbooks: they reason over the data you give them. Without pre-correlated, trace-linked front-end intelligence, they're working with half the picture.

Yorker gives them what they don't have: the user-facing layer, already processed and attributed, with the right OTel attributes and trace headers to cross-link with everything else in your stack.

- Deviation in σ attached as log attributes when behavior drifts

- Third-party attribution already labelled

- Cross-monitor correlation events emitted on browser-check co-failures

- Screenshot URLs embedded in spans

- W3C traceparent for backend cross-linking

- Structured logs with full assertion context

Built for how engineering teams work in 2026

Agentic workflows in both directions: Yorker as a data source your agents consume, and Yorker as a tool that monitors your AI infrastructure.

Monitor your AI tools

HTTP 200 doesn't mean your AI feature is working. Yorker's MCP server check validates what your Model Context Protocol servers actually return: tool availability, output correctness, and latency baselines.

- Tool availability: is the MCP server responding?

- Output validation: does the response contain expected values?

- Latency baselines: is your model taking longer than usual?
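MCP servers speak JSON-RPC 2.0, so the three questions above map onto a tools/list response, a tools/call result, and a measured latency. The sketch below validates responses that have already been fetched and parsed; it leaves out transport entirely, any tool names are hypothetical, and the 2× latency ratio is an assumption, not Yorker's baseline logic.

```typescript
// Sketch: validate already-parsed MCP (JSON-RPC 2.0) responses.
// The latency ratio is hypothetical; transport is out of scope.

interface JsonRpcResponse {
  jsonrpc: "2.0";
  id: number | string;
  result?: unknown;
  error?: { code: number; message: string };
}

interface ToolsListResult {
  tools?: { name: string }[];
}

interface ToolsCallResult {
  content?: { type: string; text?: string }[];
  isError?: boolean;
}

// Tool availability: every expected tool appears in the tools/list result.
function checkToolAvailability(res: JsonRpcResponse, expected: string[]): string[] {
  if (res.error) return [`tools/list error: ${res.error.message}`];
  const tools = (res.result as ToolsListResult | undefined)?.tools ?? [];
  const names = new Set(tools.map((t) => t.name));
  return expected.filter((name) => !names.has(name)).map((name) => `missing tool: ${name}`);
}

// Output validation: the tools/call text content contains an expected value.
function checkOutput(res: JsonRpcResponse, expectedText: string): string[] {
  if (res.error) return [`tools/call error: ${res.error.message}`];
  const result = res.result as ToolsCallResult | undefined;
  if (result?.isError) return ["tool returned isError: true"];
  const text = (result?.content ?? [])
    .filter((c) => c.type === "text")
    .map((c) => c.text ?? "")
    .join("\n");
  return text.includes(expectedText) ? [] : [`output missing "${expectedText}"`];
}

// Latency: flag responses slower than maxRatio times the baseline.
function checkLatency(latencyMs: number, baselineMs: number, maxRatio = 2): string[] {
  return latencyMs > baselineMs * maxRatio
    ? [`latency ${latencyMs}ms exceeds ${maxRatio}x baseline (${baselineMs}ms)`]
    : [];
}
```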

Designed to be used by agents

Full CLI with deploy, validate, test, and status commands. API-first design. YAML config that lives in your repo alongside your code. Coding assistants speak fluent Yorker: they create and manage monitors the same way they write infrastructure.

    $ yorker deploy
    ✓ 2 monitors created
    $ yorker status
    ✓ Checkout Flow passing
    ✓ API Health passing
    $ yorker test checkout
    ✓ Test run complete · 1.2s

    # Define monitors alongside your app code
    project: my-app
    monitors:
      - name: API Health
        type: http
        url: https://api.example.com/health
        frequency: 1m
        assertions:
          - type: status_code
            value: 200
      - name: Checkout Flow
        type: browser
        script: ./monitors/checkout.ts
        frequency: 5m
        locations: [loc_eu_west, loc_eu_central, loc_ap_northeast]

And yes, it's all code.

Plain YAML. Terraform-style plan/apply. Git-native. CI/CD-ready. If you're running a serious operation in 2026, this is table stakes, and Yorker ships it.

- Preview changes with --dry-run before applying

- Clean up orphaned monitors with --prune

- Validate config in CI with yorker validate

- Secrets stay in env vars, never in your repo
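Conceptually, a Terraform-style plan is a diff between the monitors declared in YAML and the ones that already exist. The sketch below is a hypothetical model of that diff, not Yorker's code: it matches monitors by name, compares them with a key-order-independent serialization, and only schedules deletions when pruning is requested. A dry run would print such a plan without applying it.

```typescript
// Hypothetical model of plan/apply: diff desired monitors against existing ones.
// Matching by name is an assumption made for illustration.

interface MonitorSpec {
  name: string;
  type: "http" | "browser";
  frequency: string;
  [key: string]: unknown;
}

interface Plan {
  create: string[];
  update: string[];
  unchanged: string[];
  delete: string[];
}

// JSON serialization with sorted object keys, so key order never counts as a change.
function stableStringify(value: unknown): string {
  if (Array.isArray(value)) return `[${value.map(stableStringify).join(",")}]`;
  if (value !== null && typeof value === "object") {
    const obj = value as Record<string, unknown>;
    const fields = Object.keys(obj)
      .sort()
      .map((k) => `${JSON.stringify(k)}:${stableStringify(obj[k])}`);
    return `{${fields.join(",")}}`;
  }
  return JSON.stringify(value);
}

function planChanges(desired: MonitorSpec[], existing: MonitorSpec[], prune: boolean): Plan {
  const plan: Plan = { create: [], update: [], unchanged: [], delete: [] };
  const current = new Map<string, MonitorSpec>();
  existing.forEach((m) => current.set(m.name, m));
  for (const m of desired) {
    const old = current.get(m.name);
    if (old === undefined) plan.create.push(m.name);
    else if (stableStringify(old) !== stableStringify(m)) plan.update.push(m.name);
    else plan.unchanged.push(m.name);
  }
  // Orphans (existing but no longer declared) are removed only when pruning.
  if (prune) {
    const declared = new Set(desired.map((m) => m.name));
    for (const m of existing) if (!declared.has(m.name)) plan.delete.push(m.name);
  }
  return plan;
}
```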

14 Global Locations

North America, Europe, Asia Pacific, South America, Africa, and Oceania. Private locations behind your firewall are available on every paid plan.

- Ashburn

- Dallas

- Los Angeles

- Toronto

- São Paulo

- London

- Paris

- Frankfurt

- Stockholm

- Singapore

- Tokyo

- Mumbai

- Sydney

- Johannesburg

- Free tier: 10,000 HTTP/MCP runs and 1,500 browser runs per month, 1 hosted location

- No credit card required to start

- Paid plan unlocks all 14 hosted locations, correlation analysis, and alert triage

- Private locations at 50% off hosted run rates (paid: up to 2, Enterprise: unlimited)

Close your observability blind spot

Start with the dashboard or go straight to code.

Or start from the terminal

npx @yorker/cli init